Note: adapted from an original article by Agustin Bruzzese, Jordi Delgado, Pau Tallada and Gonzalo Merino, from Port d’Informació Científica (PIC) and the Institut de Física d’Altes Energies (IFAE, High Energy Physics Institute). IFAE is a partner in the ESCAPE project.

Prototype of MAGIC data at PIC: Development and application of an automatic workflow for the replication of scientific data

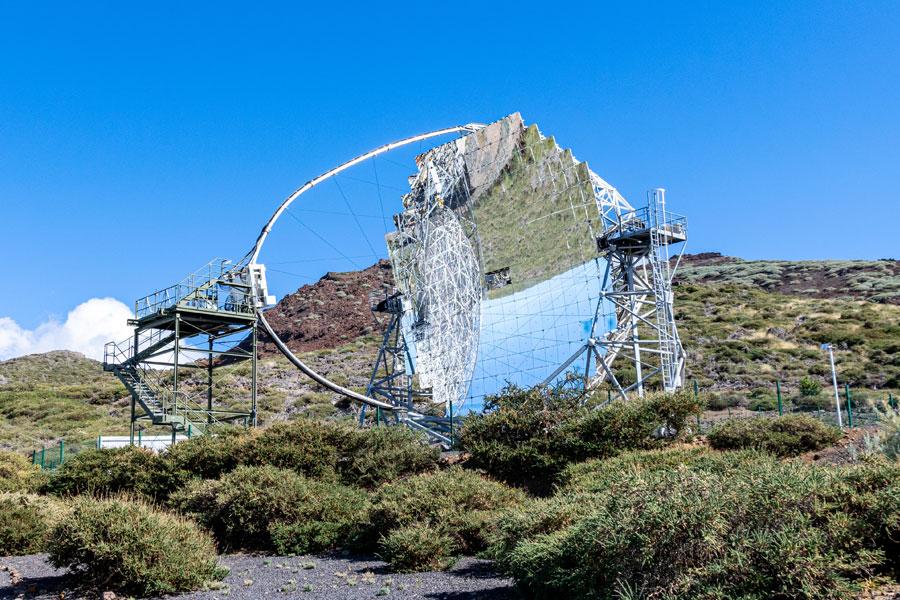

The Major Atmospheric Gamma Imaging Cherenkov (MAGIC) Telescopes are among the current gamma-ray telescopes and a predecessor of the Cherenkov Telescope Array (CTA), one of the ESFRIs supported by ESCAPE. They are dedicated to the observation of gamma rays from galactic and extragalactic sources in the very-high-energy range (from 20 GeV to beyond 100 TeV). MAGIC data are replicated to a variety of Tier-1 and Tier-2 facilities, as well as to smaller Tier-3 and Tier-4 facilities managed by partner institutions. Port d’Informació Científica (PIC) currently receives a large amount of data from the MAGIC telescopes, which is in turn distributed in real time to scientific data centers (collectively, the datalake). However, the current orchestration of data transfers does not allow fully automatic replication. Moreover, the storage available at the MAGIC data source is limited, so a mechanism is needed to delete files at the source once they have been successfully transferred to their destination.

We therefore want to develop a workflow that handles the large data sets produced by the gamma-ray telescopes, continuously streams these files to the datalake for permanent storage and access, and keeps free space at the data source. Rucio, an open-source software framework that provides scientific collaborations with the functionality to organize, manage, and access their data at scale, is well suited to this task. In addition, Rucio can trigger the automatic deletion of files once they have been successfully replicated to their destination.

Using Rucio, we developed the replication workflow shown in Fig. 1. Because the restrictions set by the experimentalists in charge of distributing MAGIC data do not allow free access to the real data, 5 to 10 mock files are generated locally at the root of the non-deterministic RSE (PIC-INJECT). Fig. 2.1 shows the resulting increase of 5 to 10 files every 30 min (blue line) in the file count of PIC-INJECT. Once these new files are generated, the script that drives the automatic replication workflow starts. First, the files are registered in the Rucio catalog (with the `add_replicas` call); the corresponding peaks in the number of records added to the catalog can be seen in Fig. 2.2. The script then organizes the registered files into Rucio collections (datasets and containers) following the MAGIC namespace, and creates replication rules pointing to the deterministic RSE (PIC-DCACHE), whose replication state is shown in Fig. 2.3. All the files of our current data samples are organized under 8 main rules according to the MAGIC namespace, which is why Fig. 2.4 shows 8 rules at the container level (blue).
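The registration and organization steps can be sketched in Python. This is a minimal, self-contained sketch, not the authors' script: the scope (`magic`), the PFN prefix and the file-naming scheme (one dataset per telescope and date, one container per telescope) are hypothetical, since the article does not describe the real MAGIC namespace. The sketch only builds the payloads; each replica entry carries the `scope`, `name`, `bytes`, `adler32` and `pfn` fields that Rucio's `add_replicas` accepts, with an explicit PFN because PIC-INJECT is non-deterministic.

```python
import random
import zlib
from collections import defaultdict

# Hypothetical values: the article does not give the real scope, PFN prefix or file names.
SCOPE = "magic"
SOURCE_RSE = "PIC-INJECT"  # non-deterministic RSE where the mock files are created
DEST_RSE = "PIC-DCACHE"    # deterministic RSE targeted by the main rules
PFN_PREFIX = "root://pic-inject.example.org//magic/"


def mock_cycle(rng, cycle):
    """Return 5 to 10 mock files (name -> content) for one 30-minute injection cycle."""
    files = {}
    for i in range(rng.randint(5, 10)):
        telescope = rng.choice(["M1", "M2"])
        # Hypothetical naming scheme: <date>_<telescope>_<cycle><index>.root
        name = f"20201201_{telescope}_{cycle:04d}{i:02d}.root"
        files[name] = rng.randbytes(1024)
    return files


def replica_payload(name, data):
    """One entry of the `files` list for add_replicas; a non-deterministic RSE needs a PFN."""
    return {
        "scope": SCOPE,
        "name": name,
        "bytes": len(data),
        "adler32": f"{zlib.adler32(data):08x}",  # Adler-32 as a zero-padded hex string
        "pfn": PFN_PREFIX + name,
    }


def namespace_of(name):
    """Hypothetical mapping: dataset per telescope and date, container per telescope."""
    date, telescope, _ = name.split("_", 2)
    return f"{telescope}_{date}", telescope


def plan_cycle(files):
    """Build replica entries, dataset contents, container contents and main rules."""
    replicas = [replica_payload(n, d) for n, d in sorted(files.items())]
    datasets = defaultdict(list)
    containers = defaultdict(set)
    for r in replicas:
        dataset, container = namespace_of(r["name"])
        datasets[dataset].append({"scope": SCOPE, "name": r["name"]})
        containers[container].add(dataset)
    # One main rule per container, pointing to the deterministic destination RSE.
    rules = [
        {"dids": [{"scope": SCOPE, "name": c}], "copies": 1, "rse_expression": DEST_RSE}
        for c in sorted(containers)
    ]
    return replicas, dict(datasets), {c: sorted(d) for c, d in containers.items()}, rules


if __name__ == "__main__":
    replicas, datasets, containers, rules = plan_cycle(mock_cycle(random.Random(0), cycle=0))
    print(len(replicas), containers, rules)
```

With the Rucio Python client (`from rucio.client import Client`), these payloads would presumably be passed to `add_replicas(SOURCE_RSE, replicas)`, `add_dataset`/`add_container`, `attach_dids` and `add_replication_rule(**rule)`. The main container rules only need to be created once: Rucio applies a rule on a collection to content attached to it later, which is what lets new files keep flowing into the 8 main rules.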

To achieve automatic deletion of files in PIC-INJECT, we implemented temporary rules, called carrier rules (Fig. 1). Once the data have been successfully replicated to the PIC-DCACHE RSE, the files in the PIC-INJECT RSE are automatically deleted, conserving space in the origin RSE (Fig. 2.4). Fig. 2.4 (green) shows the creation of two datasets: one pointing to PIC-INJECT and another pointing to PIC-DCACHE (Fig. 1). Shortly afterwards, the green line decreases once the content has been correctly replicated to the destination RSE, meaning that these datasets have been erased. Consistently, Fig. 2.1 shows a drop in the total number of files in PIC-INJECT, indicating that the replicated files have been deleted. Hence, new files can continuously be added to the main rules while being removed from the source.
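The effect of the carrier rules can be illustrated with a toy model. This is a simplified sketch, not Rucio's implementation: it assumes that a replica survives only while some rule targeting its RSE covers it, and it collapses Rucio's transfer, locking and reaper machinery into three methods (`transfer`, `drop_carriers`, `reap`) whose names are hypothetical. The dataset names `carrier-src-0` and `carrier-dst-0` are illustrative as well, and containers are flattened into plain file sets.

```python
from dataclasses import dataclass


@dataclass
class Rule:
    did: str              # dataset or container name
    rse: str              # RSE on which the rule asks for a copy
    carrier: bool = False


class ToyCatalog:
    """Toy model of rule-driven replication and cleanup (not Rucio's implementation)."""

    def __init__(self):
        self.content = {}   # DID name -> set of file names (collections flattened)
        self.replicas = {}  # RSE name -> set of file names stored there
        self.rules = []

    def register(self, rse, names):
        self.replicas.setdefault(rse, set()).update(names)

    def attach(self, did, names):
        self.content.setdefault(did, set()).update(names)

    def add_rule(self, did, rse, carrier=False):
        self.rules.append(Rule(did, rse, carrier))

    def rule_ok(self, rule):
        """A rule is satisfied when all of its files are present on its RSE."""
        return self.content.get(rule.did, set()) <= self.replicas.get(rule.rse, set())

    def transfer(self):
        """Copy every file covered by a rule to that rule's RSE (all transfers succeed)."""
        for rule in self.rules:
            available = set().union(*self.replicas.values())
            wanted = self.content.get(rule.did, set()) & available
            self.replicas.setdefault(rule.rse, set()).update(wanted)

    def drop_carriers(self):
        """Erase carrier datasets and their rules once every carrier rule is satisfied."""
        carriers = [r for r in self.rules if r.carrier]
        if carriers and all(self.rule_ok(r) for r in carriers):
            self.rules = [r for r in self.rules if not r.carrier]
            for r in carriers:
                self.content.pop(r.did, None)

    def reap(self):
        """Delete replicas that no remaining rule on their RSE protects."""
        for rse, names in self.replicas.items():
            protected = set()
            for r in self.rules:
                if r.rse == rse:
                    protected |= self.content.get(r.did, set())
            names &= protected  # in-place: drops unprotected files from this RSE


cat = ToyCatalog()
batch = {"f1.root", "f2.root"}
cat.register("PIC-INJECT", batch)
cat.attach("M1", batch)                  # main container with its rule to PIC-DCACHE
cat.add_rule("M1", "PIC-DCACHE")
cat.attach("carrier-src-0", batch)       # carrier dataset pinned to the origin RSE
cat.add_rule("carrier-src-0", "PIC-INJECT", carrier=True)
cat.attach("carrier-dst-0", batch)       # carrier dataset pointing to the destination
cat.add_rule("carrier-dst-0", "PIC-DCACHE", carrier=True)
cat.reap()                               # source copies survive: carrier-src protects them
cat.transfer()
cat.drop_carriers()
cat.reap()                               # now nothing protects the PIC-INJECT copies
print(sorted(cat.replicas["PIC-INJECT"]), sorted(cat.replicas["PIC-DCACHE"]))
# prints: [] ['f1.root', 'f2.root']
```

In this model, the source carrier rule is what keeps freshly injected files from being cleaned up before they reach PIC-DCACHE. Once both carrier rules are satisfied and removed, only the main container rule remains, so the copies at PIC-INJECT lose their protection while those at PIC-DCACHE stay.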

After 72 h of continuous running, the replication and deletion of ~1000 files of 10 MB each, following the MAGIC namespace, were recorded in the datalake (Fig. 2.5). Specifically, the number of files in PIC-DCACHE increases every 30 min, corresponding to the successful replication of the files appearing at PIC-INJECT. The results show that file uploads and rule deletions track each other consistently, suggesting that the current setup of the ESCAPE DIOS instance is well suited to this workflow. Our script therefore implements a functional, fully automated workflow that could be used by current experiments in the datalake.
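A quick consistency check of the reported numbers, assuming one injection of 5 to 10 files every 30 min for the full 72 h with per-cycle counts spread uniformly (the article does not give the exact per-cycle counts):

$$
N_{\text{cycles}} = \frac{72\ \text{h}}{0.5\ \text{h}} = 144, \qquad
144 \times 5 = 720 \;\le\; N_{\text{files}} \;\le\; 144 \times 10 = 1440, \qquad
\mathbb{E}[N_{\text{files}}] \approx 144 \times 7.5 = 1080
$$

The reported ~1000 files therefore falls within the expected range, corresponding to roughly $1000 \times 10\ \text{MB} \approx 10\ \text{GB}$ moved through the datalake.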

Figure 1. Workflow cycle of MAGIC data injection. The workflow comprises the following steps: creation of new data at the non-deterministic RSE (e.g., PIC-INJECT); registration of the files to replicate; creation of a new dataset or container in Rucio if it does not already exist; addition of a Rucio replication rule to replicate the desired DID to the destination RSE (e.g., PIC-DCACHE); addition of carrier rules, one pointing to the origin RSE (PIC-INJECT) and another to the destination RSE (PIC-DCACHE); and automatic deletion of both carrier rules and of the files at the origin RSE (PIC-INJECT).

Figure 2. Workflow performance. 1) Number of files in the RSE PIC-INJECT (blue), obtained with a counting plugin. 2) Number of files registered in Rucio's catalog (blue). 3) File replication state in the datalake: ok, replicating, stuck (blue, green, and red, respectively). 4) Rule creation according to the level of organization: files, datasets, or containers (red, green, and blue, respectively). 5) Number of files in the RSE PIC-DCACHE (blue), obtained with a counting plugin.

As a next step, we plan to configure an RSE at the Roque de los Muchachos Observatory (ORM) so that the MAGIC ORM → PIC data flow can be fully automated with Rucio. This would allow the workflow to be tested over a realistic network path.

Acknowledgment

This study was supported by the ESCAPE EU H2020 project (http://www.escape2020.eu), which aims to address the Open Science challenges shared by a number of top-class European research facilities: CTA, SKA, KM3NeT, EST, ELT, HL-LHC and FAIR.
