The ESCAPE Science Analysis Platform (SAP) aims to provide a gateway capable of accessing and combining data from multiple collections and stages for onward processing and analysis. Researchers from the European Open Science Cloud (EOSC) will be able to identify and stage existing data collections for analysis and tap into a wide range of software tools, packages and workflows developed by the European Strategy Forum on Research Infrastructures (ESFRIs). Researchers can also bring their own customised workflows to the platform and take advantage of both High-Performance Computing (HPC) and High-Throughput Computing (HTC) infrastructures to access the data and execute the workflows.

ESCAPE SAP will provide a set of functionalities from which various communities and ESFRIs can assemble a science analysis platform geared to their specific needs, rather than attempting to provide a single, integrated platform to which all researchers must adapt. ESCAPE SAP users will have easy access to research data and to the available compute infrastructure and storage, and will be able to seamlessly publish their advanced data products and software/workflows. In addition, they will be able to easily share and query published data and obtain its provenance.

ESCAPE SAP addresses several aspects of data management by bringing together, in one place, components that serve different needs:

- Data Aggregation and Staging: access and combine data from multiple collections and stage that data for subsequent analysis;

- Software Deployment & Virtualization: tools and services to support the virtualization of relevant software packages and pipelines;

- Analysis Interface: tools and services that simplify the porting of customized analysis and processing workflows to the ESCAPE SAP environment;

- Workflows & Reproducibility: map individual user workflows into a common deployment language so that they can be deployed more easily across the heterogeneous and distributed computing infrastructure underpinning the EOSC;

- Integration with HPC and HTC Infrastructures: deploy those workflows on the underlying processing infrastructure.

The users’ needs: Identifying ESCAPE SAP priorities and components

When developing a service, it is crucial to identify what the future users are looking for. ESCAPE SAP will help its users to search for data; select data, available software/workflows and compute resources; process data; and publish or share data, software and research objects. To enable this, a single sign-on mechanism giving seamless access to all integrated services is required. But how should this be accomplished?

To accurately identify the ESFRIs' needs, ESCAPE organised a use-case requirements workshop and launched a survey to identify the services and components for ESCAPE SAP in terms of data properties, computing system properties, and software properties.

Input was collected from ESFRIs representing astronomical observatories (EGO - Virgo, ESO - Paranal, EST, LOFAR, VLA, CTA), particle colliders (FAIR, HL-LHC) and astroparticle physics instruments (KM3NeT). ESFRIs that correlate data from multiple observatories (JIVE), multiple observations (Asteroseismology) and citizen science (Zooniverse, where ESCAPE Citizen Science Projects are hosted) also came on board.

From this detailed analysis, it was clear that ESFRIs need ESCAPE SAP to:

- Support project data of various sizes;

- Support both Virtual Observatory (VO) compliant data, such as data from astrophysics observatories, and experiment/simulation data that is not yet mapped to the VO;

- Integrate visualisation tools and pipelines;

- Support searching of experiments that store simulations;

- Support querying of duplicated data, as roughly half of the experiments duplicate their data storage;

- Support different types of data coming out of the instrument/observatory and the data generated by users.

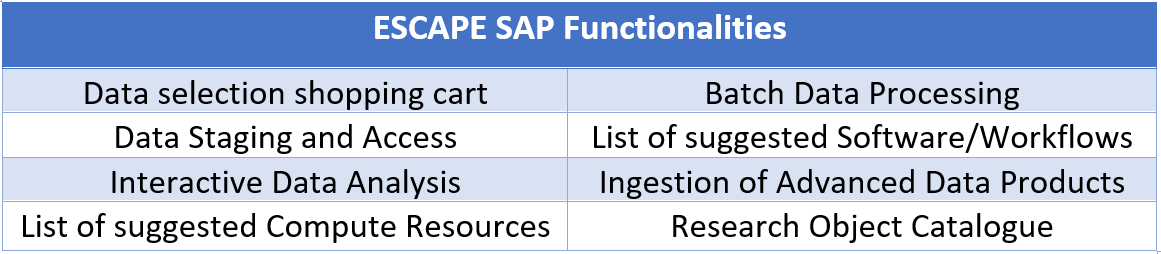

With this, ESCAPE was able to define the functionalities of each service component for ESCAPE SAP, listed in the table below.

An Architectural Design to reduce service complexity

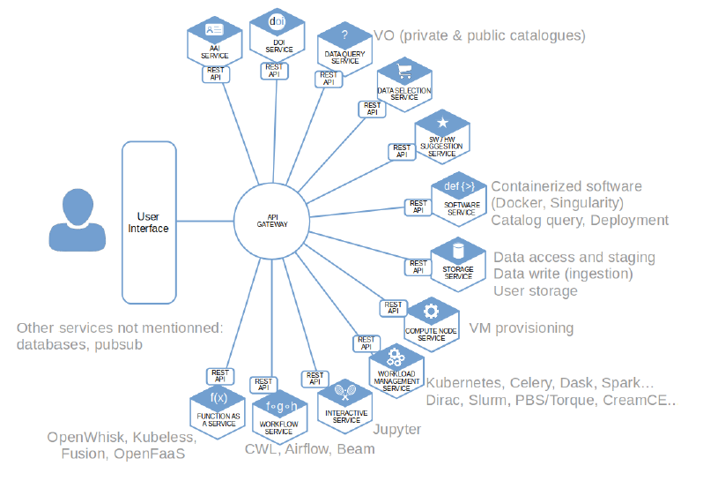

The ESCAPE SAP Architectural Design was defined to decouple the development of the different components, so that functionalities can be added or replaced without compromising the platform's robustness.

ESCAPE SAP will be built following the microservice architectural design: each functionality will be implemented as an independent, interconnected service. Independence means that if one service is removed, the others will still be able to process Application Programming Interface (API) requests, and the platform will continue to work, albeit with reduced functionality. The services are interconnected through the API Gateway and communicate with each other through exposed API endpoints to create, read, process and delete resources.
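The independence property described above can be illustrated with a small, self-contained sketch. It is not the ESCAPE SAP implementation: the `ApiGateway` class, the `data` and `workflows` service names, and the HTTP-like status codes are all hypothetical, chosen only to show how a gateway can keep routing requests to the remaining services when one of them is removed.

```python
from typing import Callable, Dict, Optional, Tuple

# A service is modelled as a function taking an HTTP-like method and a request dict.
Handler = Callable[[str, dict], dict]


class ApiGateway:
    """Hypothetical gateway: routes a request to a service by the first path segment."""

    def __init__(self) -> None:
        self._services: Dict[str, Handler] = {}

    def register(self, name: str, handler: Handler) -> None:
        self._services[name] = handler

    def remove(self, name: str) -> None:
        # Removing a service does not touch any other registered service.
        self._services.pop(name, None)

    def request(self, method: str, path: str,
                payload: Optional[dict] = None) -> Tuple[int, dict]:
        service, _, resource = path.strip("/").partition("/")
        handler = self._services.get(service)
        if handler is None:
            # Reduced functionality: only this service is unavailable.
            return 503, {"error": f"service '{service}' unavailable"}
        return 200, handler(method, {"resource": resource, **(payload or {})})


def data_service(method: str, request: dict) -> dict:
    """Illustrative stand-in for a data discovery service."""
    return {"service": "data", "method": method, "resource": request["resource"]}


def workflow_service(method: str, request: dict) -> dict:
    """Illustrative stand-in for a workflow service."""
    return {"service": "workflows", "method": method, "resource": request["resource"]}


def build_demo_gateway() -> ApiGateway:
    gateway = ApiGateway()
    gateway.register("data", data_service)
    gateway.register("workflows", workflow_service)
    return gateway


if __name__ == "__main__":
    gateway = build_demo_gateway()
    gateway.remove("workflows")                       # take one service offline
    print(gateway.request("GET", "/workflows/42"))    # unavailable service
    print(gateway.request("GET", "/data/collections"))  # still served
```

In this sketch the gateway is the only component that knows which services exist, so a service can be added or replaced by changing a single registration, which mirrors the decoupling goal stated for the architecture.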

More information about the development of ESCAPE SAP is available in “D5.1 Preliminary Report on Requirements for ESFRI Science Analysis Use-cases” and “D5.2 Detailed Project Plan”.

What lies ahead?

A first science platform prototype of ESCAPE SAP, with discovery and data sharing features, is expected to be ready by July 2020, with an initial set of ESFRI software deployed on this prototype by September 2020. A workshop in November of the same year will test the performance of this prototype.

Data volumes are increasing rapidly, making it more difficult for users to process, analyse and visualise data. ESCAPE SAP will provide the infrastructure to support the discovery, processing and analysis of data from research infrastructures in a transparent and user-friendly way.

